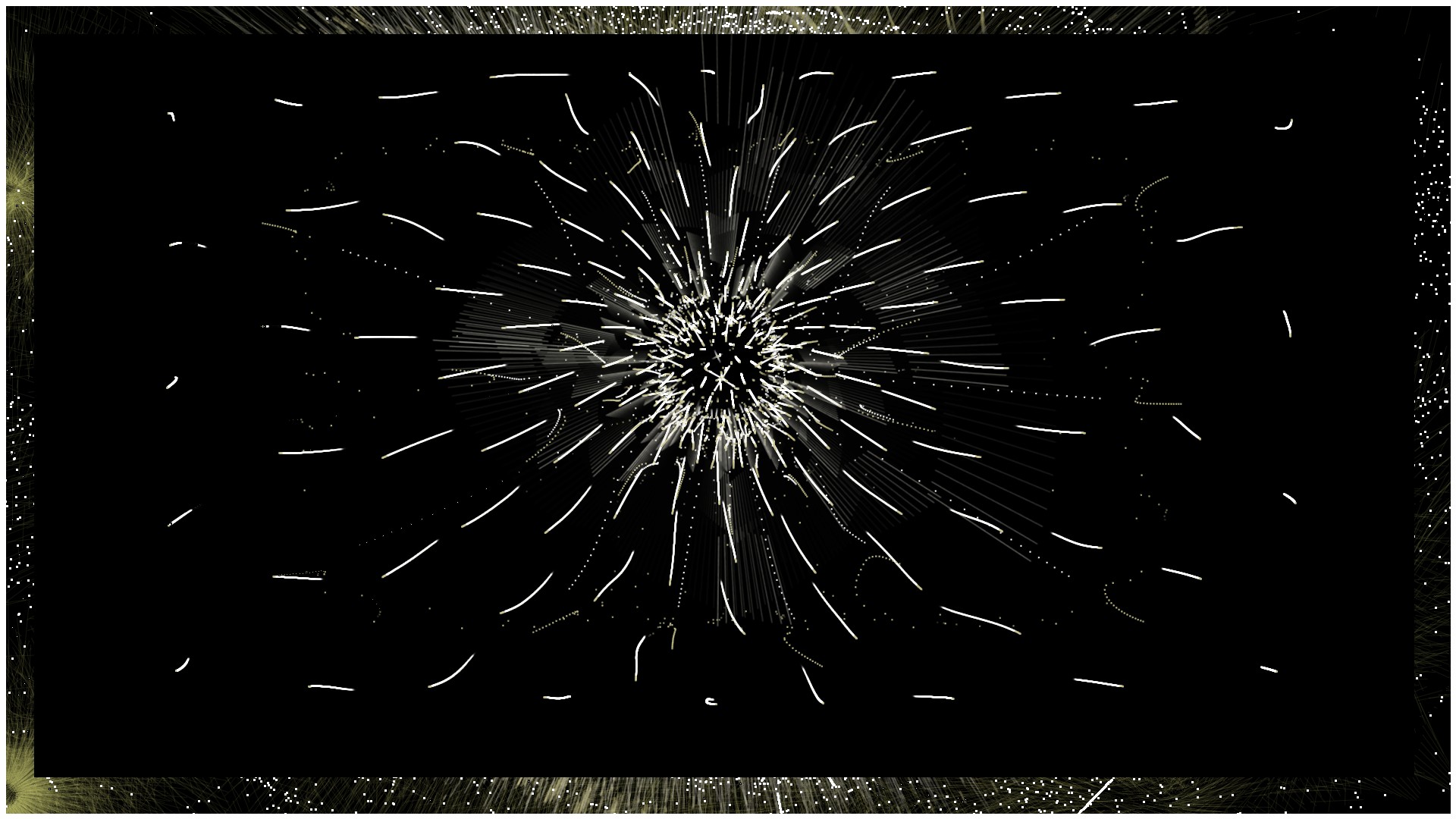

Dimension N (2.0) is a digital audiovisual performance by the duo Alba G. Corral (graphic and digital artist from Barcelona) and Dariusz Makaruk (producer and composer of contemporary music and electronic systems from Warsaw). They combine generative systems with improvised digital drawing techniques. Dimension N immerses the audience in a series of landscapes and digital paintings, set to virtuoso electronic music close in approach to the North American minimalists.

The result of this meeting is a fascinating collision of two artistic sensibilities in the form of a multidimensional live audiovisual performance.

The project was presented at:

– Madatac Festival 2017, Madrid

– PATCHlab Festival, Cracow 2015, Manggha Museum of Japanese Art and Technology

– Festival des Bains Numeriques 2016, Centre des Arts Enghien les Bains, France

– CitySonic – Festival des Arts Sonores 2016, Belgium

– Live Cinema Festival (AV Node) 2016, Rome, Italy, Museo d’Arte Contemporanea di Roma at Via Nizza

The Dimension N project is co-produced by the Centre des arts d’Enghien-les-Bains and won the Grand Prize of the international competition of the BAINS NUMERIQUES#9 Biennale 2016.

Dimension N 2.0 is co-produced with Transcultures, an MAP program initiated by the Pépinières européennes pour jeunes artistes, with the support of the Ministry of Culture of the Wallonia-Brussels Federation, Belgium.

The Dimension N project aims to explore the potential of live audiovisual performance on stage and the various interactive relations it implies: between improvisation and pre-composed content, between music and video, and between the performers and the audience. It looks at how these influence one another and inspire bending or breaking the rules. The direction is to dive into the present moment as much as possible while not forgetting its timeless nature (hence the name of the project, Dimension N, with “N” for infinity). It asks whether our concepts of the “dimensions” we live in, including time, are fixed and pre-composed, or whether they can be reinvented by experiencing the present and improvising with new perspectives.

The project takes its form from sound-reactive and generative visual content produced by custom software written in Processing, and from an interactive system, written in Processing and Max/MSP Jitter, in which sound controls video and video controls sound over the OSC protocol. The system itself is a tool, or rather a new kind of audiovisual instrument, which allows both live exploration of new worlds and the reproduction of previously composed ideas.
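For readers curious about what an exchange over OSC looks like, the following is a minimal sketch and not the artists' own software, which is written in Processing and Max/MSP Jitter. It encodes a single OSC 1.0 message carrying float values and sends it over UDP using only the Python standard library. The address `/audio/level`, the host, and the port are hypothetical names chosen for illustration.

```python
import socket
import struct


def osc_pad(data: bytes) -> bytes:
    """Null-terminate a byte string and pad it to a multiple of 4 bytes (OSC 1.0 string rule)."""
    padded_len = (len(data) // 4 + 1) * 4
    return data.ljust(padded_len, b"\x00")


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC message whose arguments are all 32-bit big-endian floats."""
    type_tags = "," + "f" * len(values)
    payload = b"".join(struct.pack(">f", v) for v in values)
    return osc_pad(address.encode("ascii")) + osc_pad(type_tags.encode("ascii")) + payload


def send_level(level: float, host: str = "127.0.0.1", port: int = 9000) -> None:
    """Send one sound level to a listener; address, host and port are illustrative assumptions."""
    packet = osc_message("/audio/level", level)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
```

In a setup like the one described, the sound side might call something like `send_level(0.5)` many times per second, while the visual side listens on the same port and maps the incoming value to a drawing parameter; messages can equally travel in the opposite direction so that video controls sound.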

The next stage of the project aims to further explore the possibilities of this new system and to develop it further, allowing the creation of more immersive sound-and-videoscapes and the composition of new audiovisual pieces for future performances. It also intends to research the relationship between self-generated content and new ways of controlling it.
